What if you lost your voice—but not your thoughts? For many people suffering from severe paralysis or neurological conditions like ALS or stroke, the biggest challenge isn’t forming words in the brain. It’s getting them out.

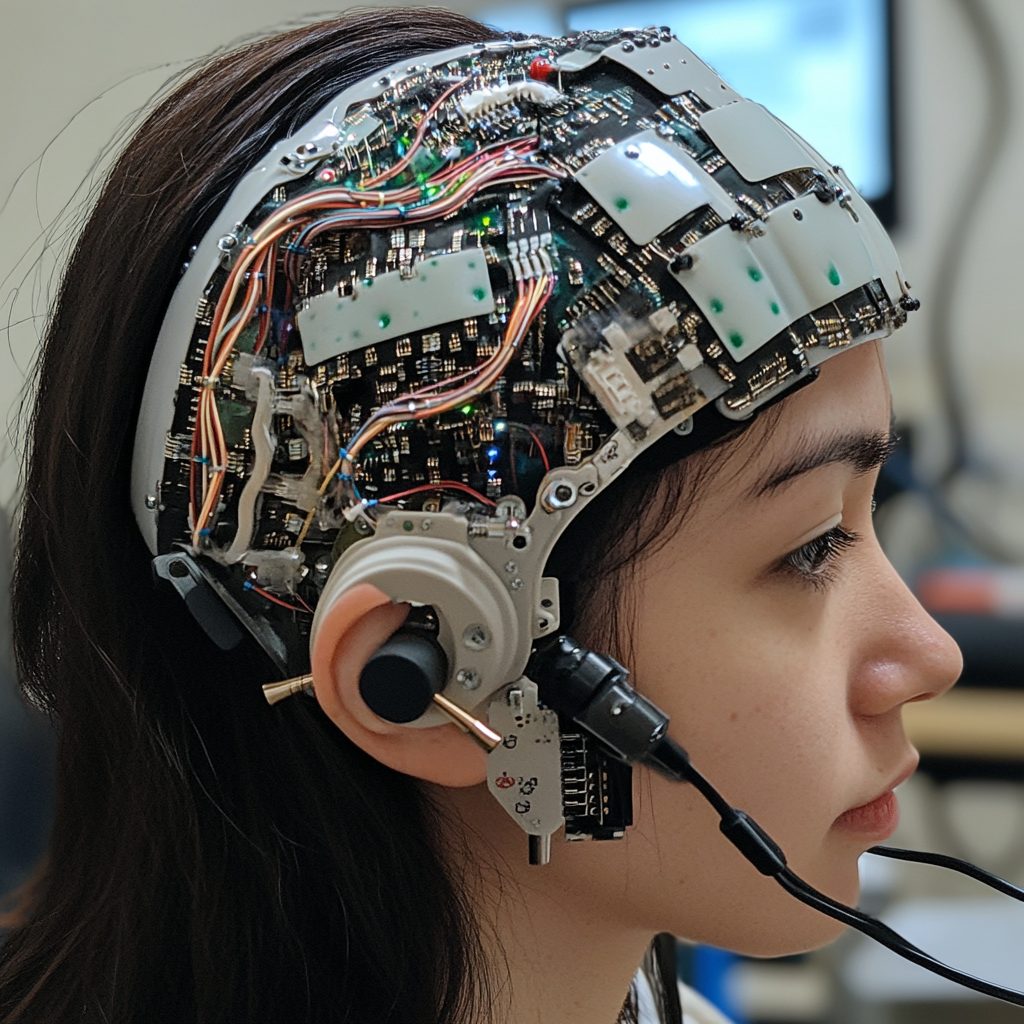

Now, a groundbreaking project at UC Berkeley has turned this science fiction into reality. Researchers have developed a brain-to-voice neuroprosthesis that translates brain signals directly into speech—complete with tone, rhythm, and emotion. This isn’t robotic, monotone speech. It’s a near-natural voice, personalized to sound like the individual before they lost their ability to speak.

“The dream is to restore the full, embodied experience of communication,” said Gopala Anumanchipalli, assistant professor of electrical engineering and computer sciences at UC Berkeley.

What’s the Breakthrough Here?

Let’s cut to the chase: This is the first time that brain signals have been used to generate a natural-sounding voice that mimics a person’s original speech patterns. The system captures the intended vocal tract movements (even if your mouth and tongue can’t move), converts those signals into realistic sounds, and reproduces them in a way that listeners can understand—and emotionally connect with.

Researchers trained the AI system on brain activity recorded from a volunteer who could still speak. Using high-resolution brain recordings and machine learning models, they mapped which brain signals corresponded to which sounds, and to the vocal tract movements that would normally produce those sounds.

After that, the speech synthesis model was able to generate voice directly from brain activity—even when the person wasn’t speaking aloud. Just thinking about saying something was enough.
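To make the two-step idea concrete (brain signals to vocal tract movements, then movements to sound), here is a toy sketch in Python. It is not the researchers' model: the article doesn't describe their architecture, so plain least-squares regression stands in for their machine learning models, all data is synthetic, and every name and size here (`make_toy_data`, 32 channels, 6 articulators, 10 acoustic features) is made up for illustration.

```python
import numpy as np

def fit_linear(X, Y):
    """Least-squares weights W such that X @ W approximates Y."""
    W, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return W

def make_toy_data(n_frames=500, n_channels=32, n_artic=6, n_acoustic=10, seed=0):
    """Synthetic stand-ins for recordings (assumption: nothing here is real data)."""
    rng = np.random.default_rng(seed)
    # Pretend neural features, one row per time frame.
    neural = rng.normal(size=(n_frames, n_channels))
    # Pretend vocal tract movements, driven by the neural features plus noise.
    artic = neural @ rng.normal(size=(n_channels, n_artic))
    artic += 0.01 * rng.normal(size=artic.shape)
    # Pretend acoustic features, driven by the movements plus noise.
    acoustic = artic @ rng.normal(size=(n_artic, n_acoustic))
    acoustic += 0.01 * rng.normal(size=acoustic.shape)
    return neural, artic, acoustic

def train_two_stage(neural, artic, acoustic):
    """Stage 1: neural -> movements. Stage 2: movements -> acoustic features."""
    W1 = fit_linear(neural, artic)
    W2 = fit_linear(artic, acoustic)
    return W1, W2

def decode(neural, W1, W2):
    """Chain both stages: estimate movements first, then the sound features."""
    return (neural @ W1) @ W2

neural, artic, acoustic = make_toy_data()
train, test = slice(0, 400), slice(400, 500)
W1, W2 = train_two_stage(neural[train], artic[train], acoustic[train])

# At decode time only brain activity is needed; no movement or audio is recorded.
predicted = decode(neural[test], W1, W2)
rel_error = np.linalg.norm(predicted - acoustic[test]) / np.linalg.norm(acoustic[test])
print(f"relative error on held-out frames: {rel_error:.3f}")
```

The point of the intermediate step is that the decoder never has to guess sound straight from brain activity. It first estimates what the vocal tract was meant to do, then turns that estimate into sound features. A real system would also need a final stage that turns those features into an audible, personalized voice, which this sketch leaves out.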

Why This Matters

People with conditions like ALS often rely on text-to-speech devices. These tend to be slow, and their output sounds robotic and emotionally flat, which makes it hard for people to truly express themselves. This new neuroprosthesis is a leap toward restoring real human interaction.

Also, the AI behind this isn’t limited to just voice synthesis. The team believes it could eventually lead to full, fluid conversations between people who have lost the physical ability to speak and their loved ones. If you’d like to dive deeper, check out the original story on UC Berkeley’s Engineering site.

Q&A: What People Want to Know

Q: Can this brain-to-voice tech help people who were never able to speak in the first place?

Right now, the system relies on training data from people who have spoken before, so it’s not yet usable for those who have never been able to speak. But researchers hope to adapt the model to work with imagined speech patterns too, something they’re actively exploring.

Q: Is this technology available for everyday use yet?

Not yet. The current setup still requires invasive brain implants and specialized lab equipment. However, the success of this project is a major step toward making more accessible versions in the future.
